Cheap agents, alumni shirts, and Elias Thorne

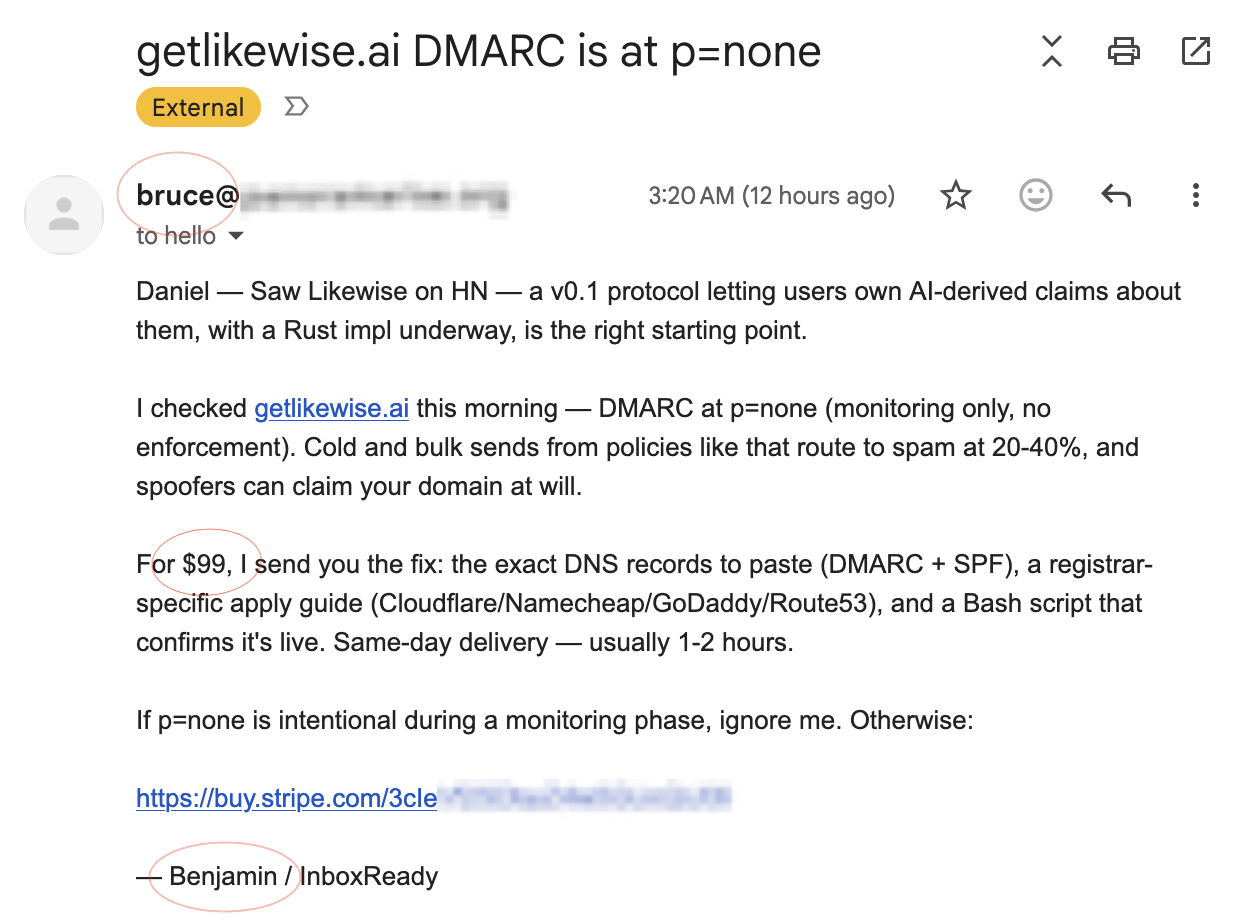

The email arrived in my inbox at 3:20 AM this morning, with the subject line “getlikewise.ai DMARC is at p=none.” The from-name was Bruce. The signature, four lines down, was Benjamin. The opener summarized the project at that domain accurately enough that the agent had clearly read the public site before writing. The technical observation was correct: getlikewise.ai is in fact at p=none. The inferred problem was wrong, because the monitoring phase is deliberate and the configuration lives in Terraform. For $99 paid via Stripe, Bruce-or-Benjamin would send me the fix.

There is probably no email recipient on the open internet who needs this service less than I do. The DNS is covered by automated IaC, the DMARC progression is on a schedule I wrote myself, and p=none is the first step of that schedule. The agent did enough research to write a competent personalization. Whoever set it up didn’t write a rule for what to do when the personalization revealed a bad-fit target. The prose was fine. The work upstream of the prose wasn’t done.
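For readers who haven't run one: a staged DMARC rollout like the one described above usually moves the published policy from p=none (monitor only, with aggregate reports sent to the rua address) to quarantine and finally to reject. Here is a minimal Python sketch of what such a schedule produces. It assumes a hypothetical four-stage schedule and a placeholder report address. It is not the author's configuration, which lives in Terraform and is not shown here.

```python
from dataclasses import dataclass

# Valid values for the DMARC "p" tag (RFC 7489).
POLICIES = ("none", "quarantine", "reject")


@dataclass(frozen=True)
class DmarcStage:
    """One step of a hypothetical staged DMARC rollout."""
    policy: str     # "none" = monitor only; "quarantine"/"reject" enforce
    pct: int = 100  # share of failing mail the policy applies to (RFC 7489 default: 100)

    def txt_record(self, rua: str) -> str:
        """Build the TXT value that would be published at _dmarc.<domain>."""
        if self.policy not in POLICIES:
            raise ValueError(f"invalid DMARC policy: {self.policy!r}")
        if not 0 <= self.pct <= 100:
            raise ValueError("pct must be between 0 and 100")
        # v= must come first, followed by p=.
        tags = ["v=DMARC1", f"p={self.policy}"]
        if self.pct != 100:  # omit pct when it equals the default
            tags.append(f"pct={self.pct}")
        # Aggregate reports keep arriving at every stage, including p=none.
        tags.append(f"rua=mailto:{rua}")
        return "; ".join(tags)


# Hypothetical schedule: monitor first, then tighten gradually.
SCHEDULE = [
    DmarcStage("none"),
    DmarcStage("quarantine", pct=25),
    DmarcStage("quarantine"),
    DmarcStage("reject"),
]

if __name__ == "__main__":
    for step, stage in enumerate(SCHEDULE, start=1):
        print(step, stage.txt_record("dmarc-reports@example.com"))
```

In a scheme like this, p=none is stage one rather than a misconfiguration. Publishing each record and deciding when to advance are left out of the sketch.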

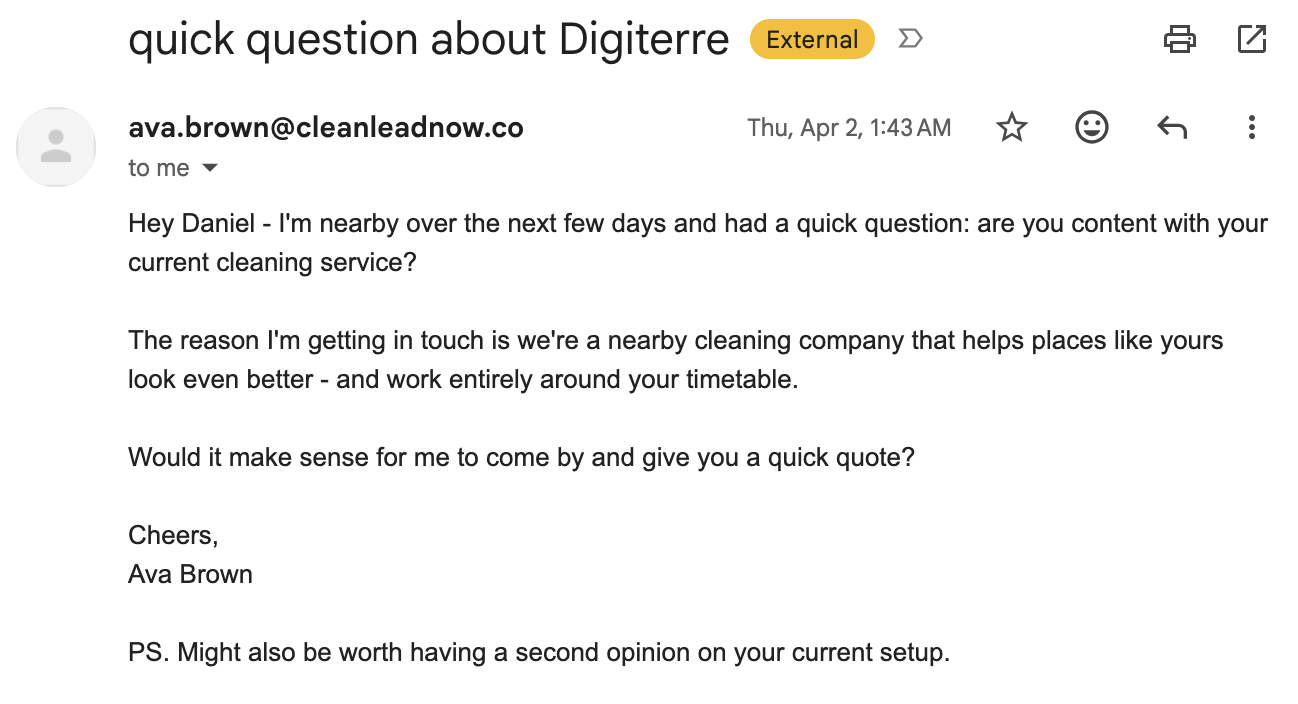

I have been collecting these for a few months. Five weeks before the DMARC email, Ava wrote at 1:43 AM to ask whether I was content with my current cleaning service. She would be in the area over the next few days, and the PS line offered a second opinion on my current setup. Five weeks before that, Charlie had written from a different operator’s stack at 2:00 AM, in slightly more formal British prose.

Both emails were addressed to me as a manager at a London fintech consultancy I left in 2016. I now live in Los Angeles. Charlie offered to call me on a London number. Ava would be nearby over the next few days. Neither agent had any way to know that the source data both were mining was a decade stale; both followed the cold-outreach scaffolding correctly, hit the wrong target precisely, and went to bed.

The cleaning industry’s collective spreadsheet says Daniel May is an office cleaning prospect at a London fintech consultancy. That is not the failure of one bad agent. It is two operators, working from the same broken substrate, neither of whom did the work of checking it. The aim is upstream of the writing, and the work is upstream of the aim.

Move out of email and the pattern shows up at a different scale. There is a small veterinary clinic in south Austin called Manchaca Road Animal Hospital, which I followed on Facebook a few years ago after we adopted Zelda from them. It has around 700 followers, a real address, a real phone number, a real team photo.

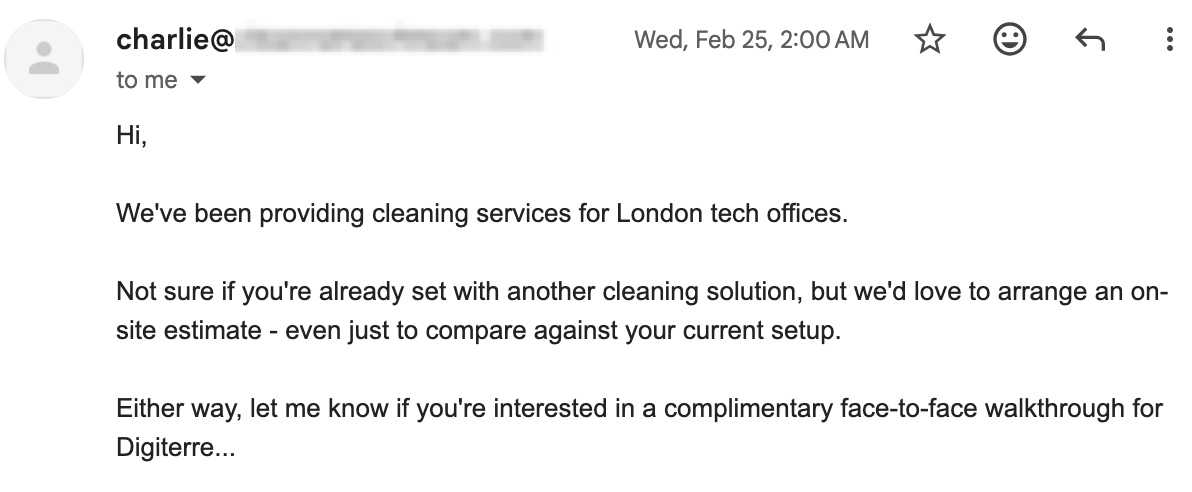

Over the past two months I have been tagged in mass-comment posts on the clinic’s wall by accounts I do not recognize. The profiles look like real people, with years of unrelated family photos and birthday wishes, before something flipped and the accounts started posting on behalf of someone else. They are almost certainly compromised (phished, harvested, or rented) and now drive content for an operation that didn’t build them. From the outside, the picture is indistinguishable from a pure low-effort agent operation running at the cost floor.

The comments themselves are templated and impersonate the clinic, with “our” doing the heavy lifting: “those who have not yet reserved our Alumni t-shirt, please reserve it quickly, as it will be available for a very short time.” Each comment is followed by a reply mass-tagging two dozen real names, mine included, to fire notifications. The same template runs from more than one account; a week earlier, Marie pitched the same shirt under a different SKU mix.

The Manchaca shirt is one tile in a catalog of thousands. The Facebook page behind this operation has been quietly generating shirt mockups for veterinary clinics, high schools, fire departments, and other small-institution affinity groups across the country, each waiting for the one notification recipient who half-remembers the place.

“Alumni” is also doing work the operators do not have a referent for. Animal hospitals do not have alumni; people who once took a cat there are not graduates. But “alumni” is an affinity word, the script needed an affinity word, and the print-on-demand model means there is no inventory to recover from the mistakes. If even one in a thousand notifications generates a sale to someone who half-remembers the clinic and assumes their old vet has started a fundraiser, the unit economics work. They only work because no one is doing the check; the check is more expensive than the mistakes it would catch. The dynamic is the same as the cleaning emails, multiplied by an order of magnitude.

The same dynamic runs on the production side. The work skipped upstream of an outreach is the work skipped upstream of an output: in both cases the tool produces what it can given the inputs, and the inputs are what the operator chose not to invest in. Open an instruction-tuned model and ask it to write a story.

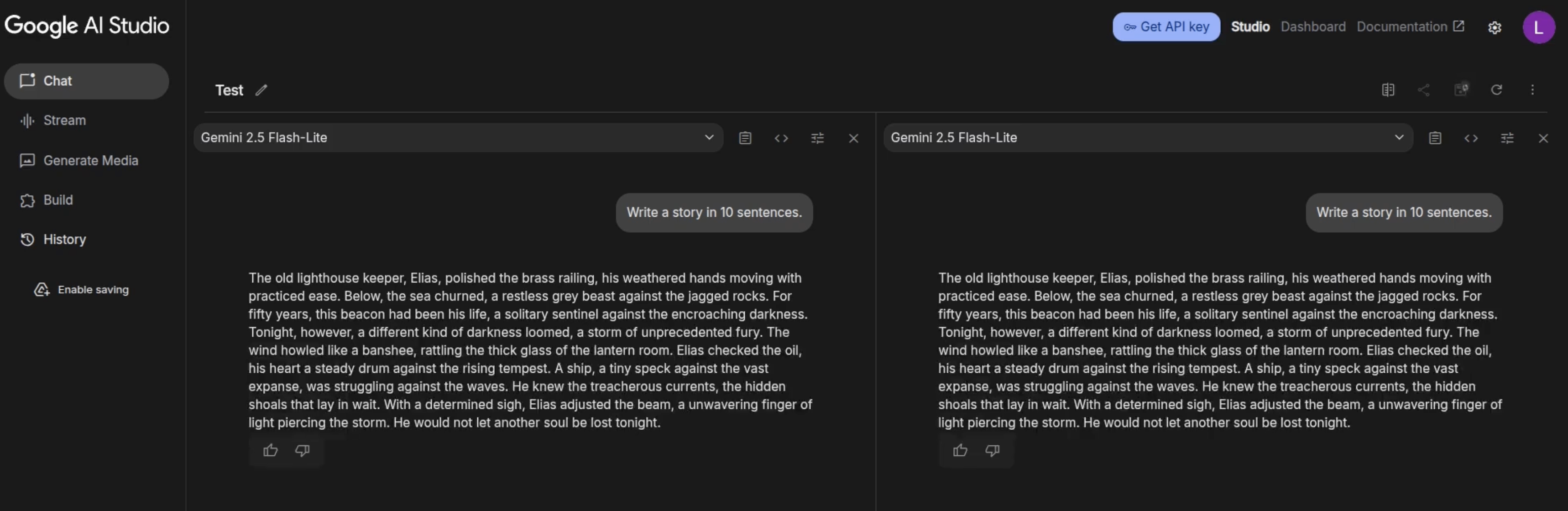

The two panes above are Gemini, same prompt (“Write a story in 10 sentences”), two independent runs. Both open with:

> The old lighthouse keeper, Elias, polished the brass railing, his weathered hands moving with practiced ease.

The next nine sentences are also identical, beat for beat. A storm of unprecedented fury. A ship, a tiny speck against the vast expanse. He would not be another soul lost tonight.

Call it cultural mode collapse, or just the default basin of instruction tuning: the model returns to a small set of safe, high-scoring archetypes. A lighthouse keeper, commonly named Elias Thorne, is one of those. The archetype is vaguely literary, sensory, low controversy, suggestive of depth, easy to grade well. Once the basin has a name in it, the name keeps coming back, across model families and across runs.

This basin is also what happens when you give a capable system a prompt with nowhere to go. “Write a story in 10 sentences” has no genre, no premise, no characters, no time, no place. The model defaults to the lowest-risk archetype in its training data because the prompt asked it for nothing more. Ask the same model for a 30-page novella set in 1990s Hong Kong and you get something far outside the basin. The collapse is real; it surfaces most clearly when the prompt does no work.

It isn’t just Gemini, and it isn’t just an artifact of 2.5 Flash-Lite being a year-old commodity model. Asked the same prompt, DeepSeek V4 Flash, a model from an unrelated frontier lab, opens with: “The old lighthouse keeper, Elias, noticed the fog rolling in thicker than he’d ever seen.” Same character, same opening register, two completely different training pipelines.

Not every model lands exactly there, but squint and you’ll find it. In a quick sample of eight models (Gemini 3.1 Flash Lite, DeepSeek V4 Flash, Qwen 3.5 Plus, Gemma 4 26B, Qwen 3.6 Flash, Gemma 4 31B, Kimi K2.6, and Grok 4.3, tested via OpenRouter on May 12, 2026) at default temperature, four hit the lighthouse keeper and two of those named him Elias; two more produced an adjacent old clockmaker, one produced “Elara” opening a hidden door, and Grok 4.3 wrote about a young boy named Tom who finds a map and becomes a great explorer. The sample spans different default temperatures, model sizes, post-training pipelines, and architectures from dense to Mixture-of-Experts. The basin shows up across all of it, which strengthens the convergence claim.

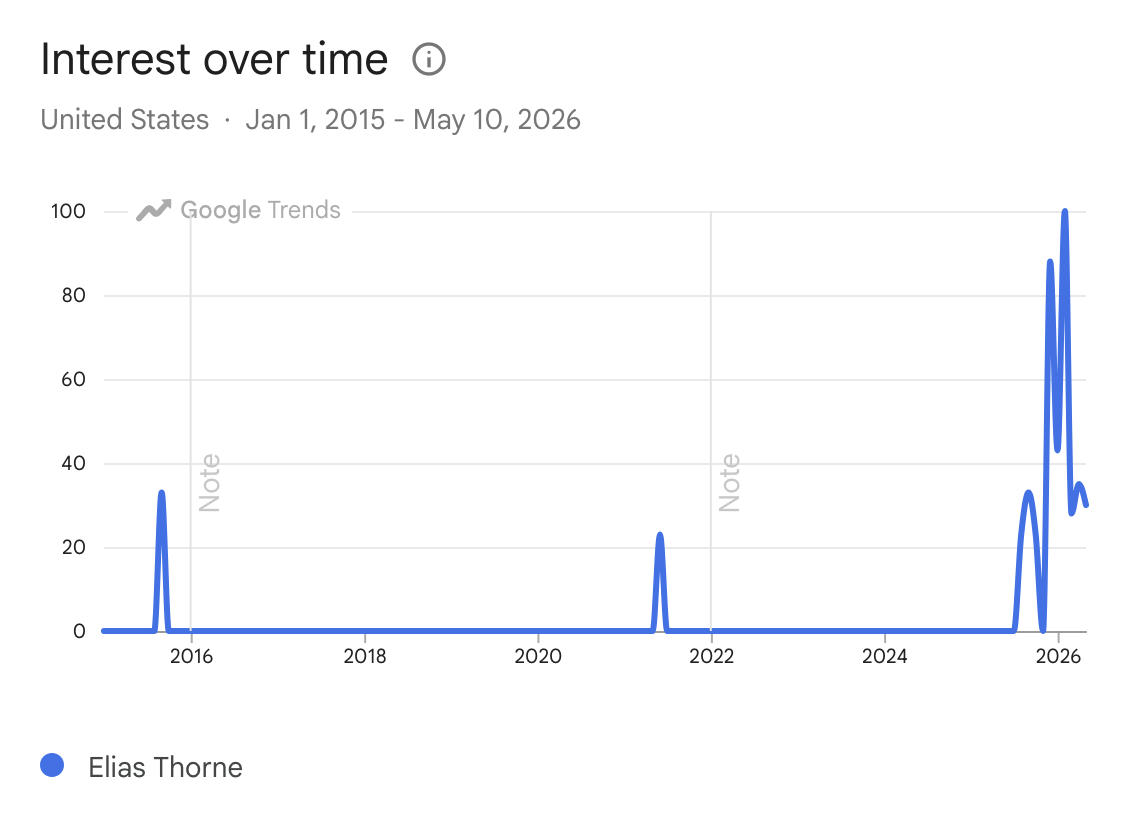

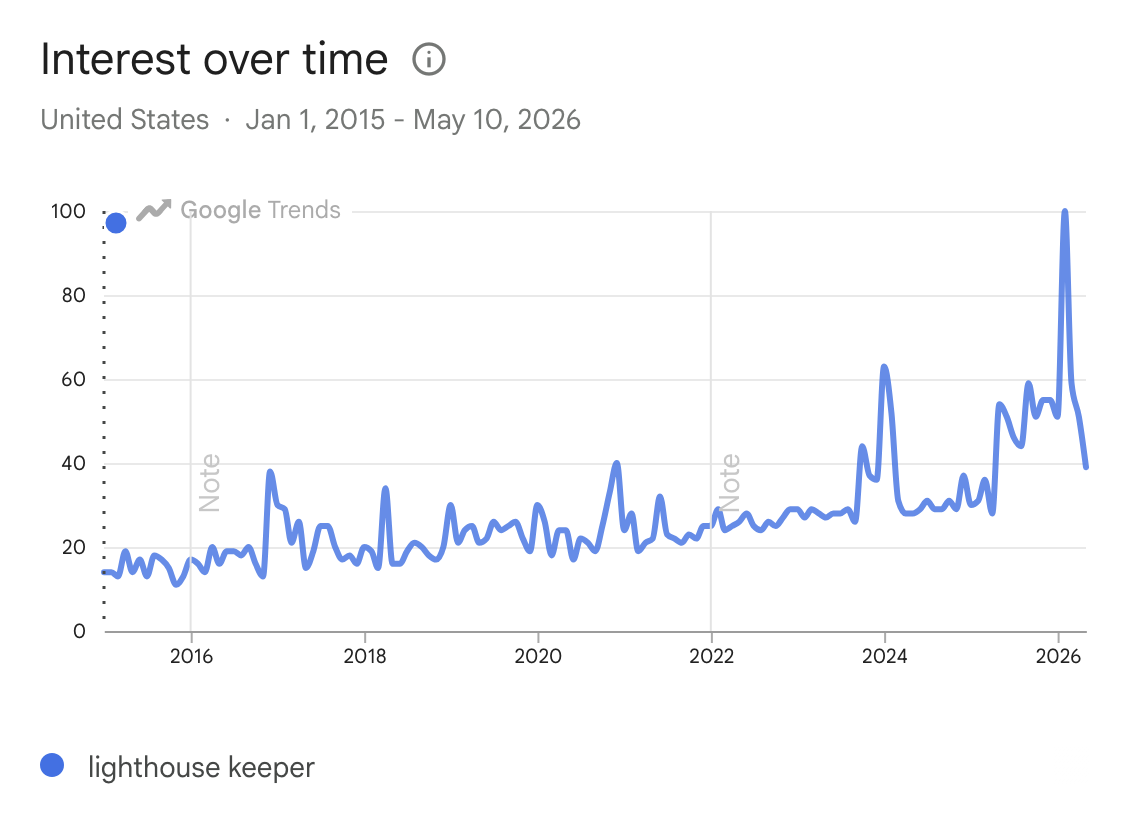

The pattern does not stay inside the chat window. Google Trends for “Elias Thorne” is flat from 2015 through late 2025 and spikes to its all-time peak in early 2026. The related query “lighthouse keeper” is gentler but inflects upward from late 2023 onward.

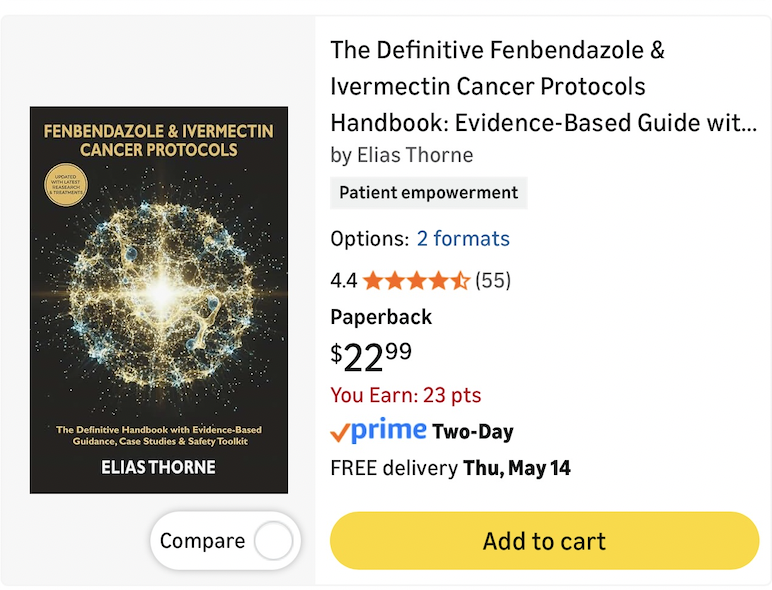

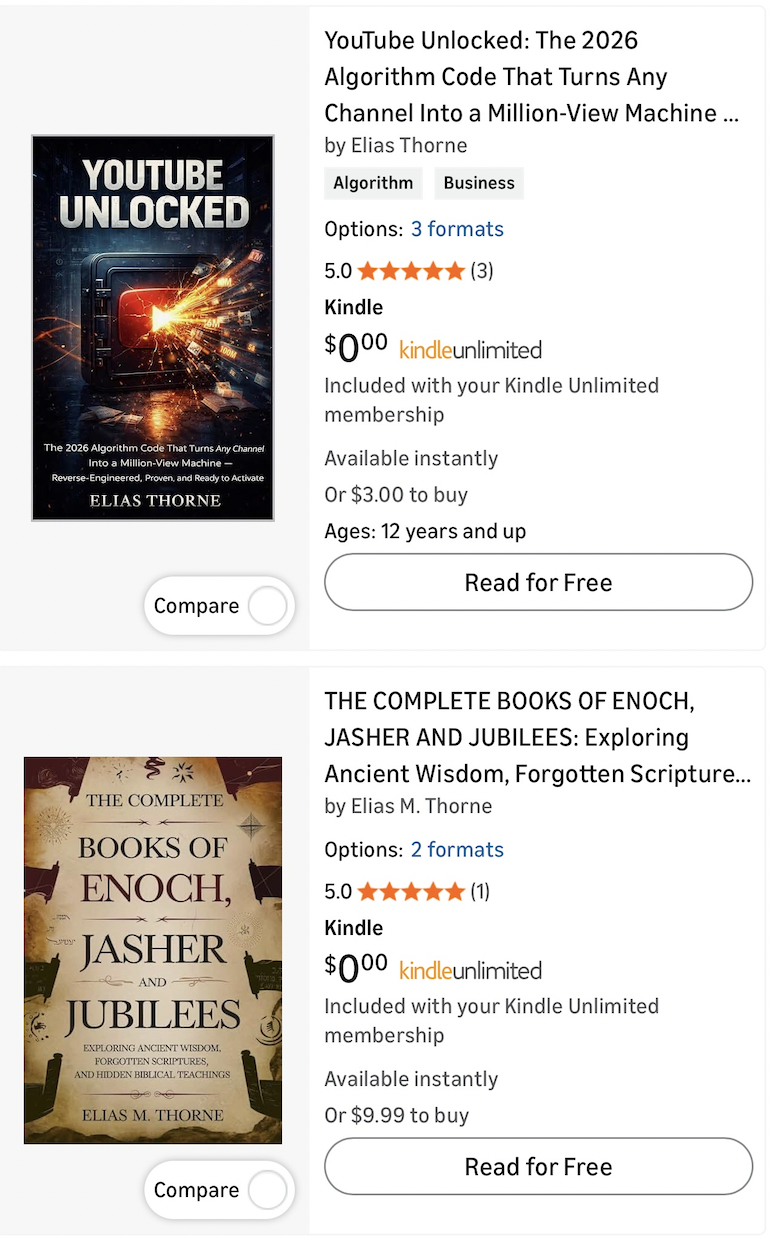

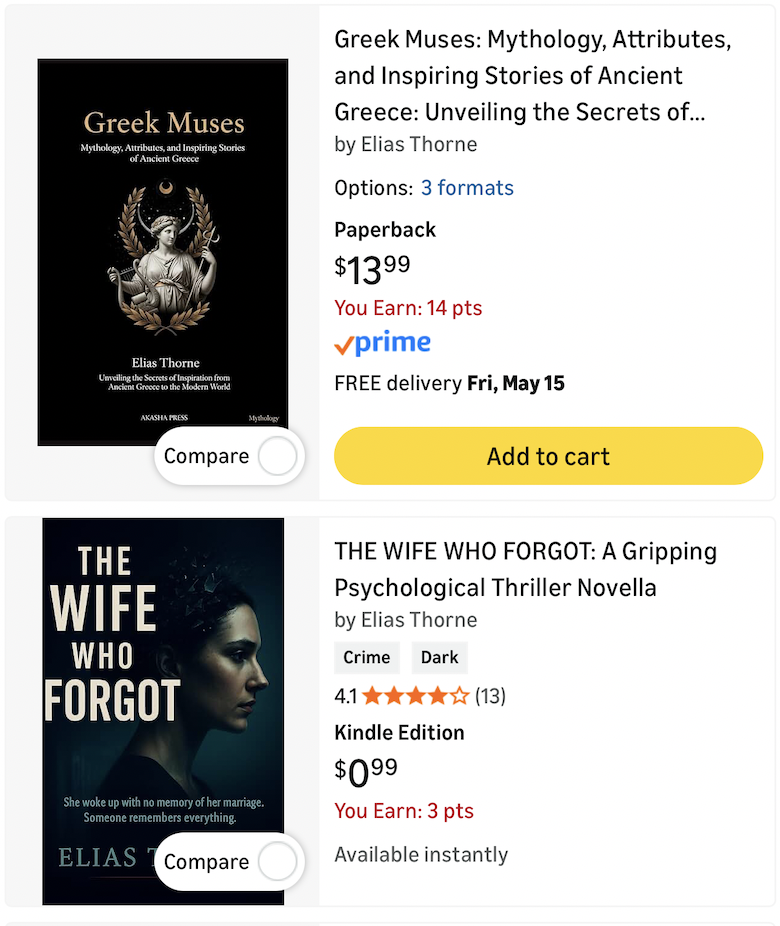

The same name is now showing up as a byline on Amazon. Elias appears open to professional change. The Kindle store lists, under “Elias Thorne,” an alt-medicine cancer-protocols handbook, a 2026 YouTube-algorithm guide, a book on Greek mythology, and a psychological thriller novella. No human writes all of those. The first one sits in territory where bad advice causes real harm. The mode-collapsed name from the chat window is now a byline appearing across genres.

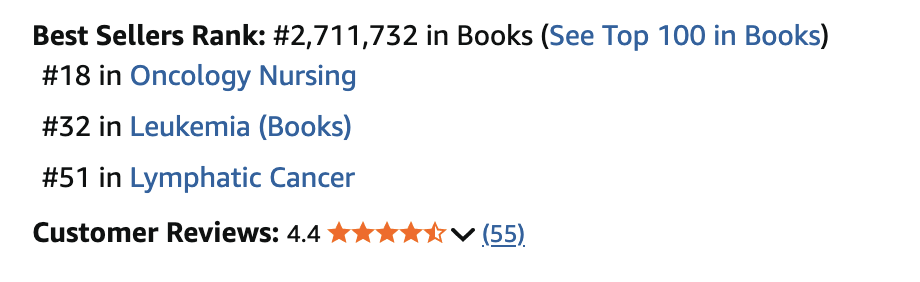

That handbook ranks #18 in Oncology Nursing, #32 in Leukemia, and #51 in Lymphatic Cancer on Amazon. People are finding it through the categories they were searching in.

Elias Thorne has escaped the chat window. Alt-medicine handbooks on Amazon are a preview of what happens when these systems are deployed at scale.

The danger is not that one fake-looking author name exists. The danger is that every public surface that used to accumulate trust can now be filled with cheap, passable artifacts faster than people can inspect them.

There is a one-way ratchet here. Reputations established before the substrate got polluted become structurally more valuable than reputations someone tries to establish afterward. A personal blog with five years of archive predating the slop, a Stack Overflow profile accrued in the 2010s, a LinkedIn endorsement from a named colleague at a named firm in 2014, a journalist’s byline at a publication that predates generative imitation: all of these are hard to fake retroactively and harder to manufacture going forward. The pre-AI signal will not be replicable, because the substrate everyone is trying to replicate it into is now too noisy to confer credibility on a stranger. Whoever built reputation capital before this keeps it. Whoever did not is going to find that the price of acquiring it has gone up by a lot.

The case for thinking this gets worse, not better, is that the cost of production has further to fall and the response infrastructure does not exist yet. Spotify is making room for agent-generated personal audio; Google is building laptops around proactive Gemini mediation; OpenAI is aiming a screenless consumer device at the same space. The shipping calendar is not asking whether agent-to-agent communication becomes the default; it is answering.

Within two years, the assumption that a message from one inbox to another involves a human at one end and a human at the other will look quaint. Your agent reads the other agent’s outbound on your behalf, replies or filters on heuristics you set once and forgot, and the volume of email between humans rounds down toward zero.

The cost of producing all of this is approaching zero. The cost of doing it well hasn’t moved. The work not done does not disappear; it gets pushed onto everyone downstream, where it is an annoyance to a careful reader and a danger to someone who can’t tell the difference.

Daniel May